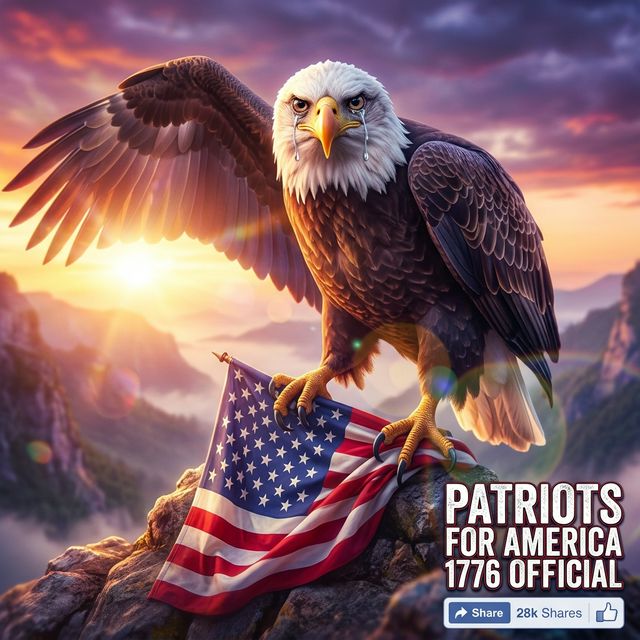

The eagle is weeping. This must be established at the outset, because the tears are the first thing the viewer encounters and the last thing the image explains. They are large, symmetrical, and catch light from what appears to be two separate suns, a celestial arrangement the paper's science correspondent, if the paper had one, would be obliged to investigate. The paper has none. The tears will have to speak for themselves.

The specimen — recovered from the Facebook account "Patriots For America 1776 Official" on the morning of March 14th, 2026, and shared approximately fourteen thousand times before the paper became aware of its existence — depicts a bald eagle of uncertain emotional state clutching an American flag in talons that number, upon close examination, seven. The flag itself contains fifty-three stars, arranged in a pattern that suggests the system responsible for its generation has a working relationship with American iconography but not, in any binding sense, with American history.

The account that posted the specimen has published, in the preceding ninety days, four hundred and twelve images of comparable character. Each depicts a patriotic subject. Each contains at least one anatomical or historical error. None has been corrected. The account's bio reads, in full: "We Stand For What Is Right." What is right, in this context, appears to include a seventh talon.

The paper does not speculate on the emotional life of eagles, artificial or otherwise. It notes only that the tears, which fall in perfectly symmetrical tracks down both sides of the beak, bear no relationship to any documented avian behavior and a considerable relationship to the visual language of sentimental greeting cards, a genre the system has evidently studied with more diligence than it has studied ornithology.
